
Confidence is often treated as a proxy for performance. When individuals appear confident, their decisions are assumed to be accurate. When confidence falters, performance is assumed to decline.

Under conditions of uncertainty, this relationship breaks down.

This article explains why confidence and accuracy frequently diverge when predictive reliability is reduced, and why this divergence is a structural feature of uncertain environments rather than a flaw in judgment or self-awareness.

In stable environments, confidence serves a useful function: it signals how likely a decision is to be correct. As learning consolidates and prediction error decreases, confidence tends to align with accuracy.

This alignment depends on:

When these conditions hold, confidence becomes a meaningful calibration signal.

Here, confidence refers to an individual’s subjective sense of decision reliability, not to assertiveness, risk tolerance, or general self-belief. Its relevance lies in how accurately it reflects underlying decision quality.
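One common way to quantify how well confidence reflects decision quality is to compare average stated confidence with the proportion of decisions that were actually correct, often alongside the Brier score. The sketch below assumes confidence is recorded as a probability between 0 and 1 for each decision. The function names and the sample data are hypothetical, for illustration only.

```python
from statistics import mean

def calibration_gap(confidences, outcomes):
    """Mean confidence minus observed accuracy (hypothetical helper).

    confidences: subjective probability (0 to 1) that each decision is correct
    outcomes: 1 if the decision turned out correct, 0 otherwise
    A positive gap suggests overconfidence, a negative gap underconfidence.
    """
    if not confidences or len(confidences) != len(outcomes):
        raise ValueError("need equal-length, non-empty sequences")
    return mean(confidences) - mean(outcomes)

def brier_score(confidences, outcomes):
    """Mean squared difference between confidence and outcome; lower is better."""
    if not confidences or len(confidences) != len(outcomes):
        raise ValueError("need equal-length, non-empty sequences")
    return mean((c - o) ** 2 for c, o in zip(confidences, outcomes))

# Made-up data: 90% confidence on every decision, but only 3 of 5 correct.
conf = [0.9, 0.9, 0.9, 0.9, 0.9]
correct = [1, 1, 1, 0, 0]
print(round(calibration_gap(conf, correct), 2))  # positive gap: overconfident
print(round(brier_score(conf, correct), 3))
```

A gap near zero means confidence is, on average, a usable calibration signal; the Brier score additionally penalizes confidence that is badly matched to individual outcomes.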

Under uncertainty, the informational conditions that support confidence calibration weaken.

When feedback is delayed, incomplete, or unreliable:

As a result, confidence is no longer anchored to performance in a stable way.
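To make this mechanism concrete, here is a toy simulation. It is not a model taken from the research this article summarizes; it assumes a hypothetical learner whose confidence is simply the running proportion of decisions that feedback reported as correct. The parameters `feedback_prob` (incomplete feedback) and `flip_prob` (unreliable feedback) are illustrative assumptions; delayed feedback is not modeled.

```python
import random

def simulate_confidence(true_accuracy, feedback_prob, flip_prob,
                        prior=0.5, trials=20_000, seed=0):
    """Toy learner (hypothetical): confidence = share of feedback saying 'correct'.

    true_accuracy: probability that each decision is actually correct
    feedback_prob: probability that a decision receives any feedback at all
    flip_prob: probability that received feedback reports the wrong outcome
    prior: starting confidence, counted as one pseudo-observation
    """
    rng = random.Random(seed)
    successes, observations = prior, 1.0
    for _ in range(trials):
        correct = rng.random() < true_accuracy
        if rng.random() >= feedback_prob:
            continue  # no feedback: confidence has nothing to update on
        reported = correct if rng.random() >= flip_prob else not correct
        successes += reported  # True counts as 1, False as 0
        observations += 1
    return successes / observations

# Reliable feedback: confidence settles near the true accuracy of 0.8.
print(simulate_confidence(0.8, feedback_prob=1.0, flip_prob=0.0))
# Unreliable feedback: same accuracy, but confidence settles near
# 0.8 * 0.7 + 0.2 * 0.3 = 0.62, i.e. underconfidence despite correct decisions.
print(simulate_confidence(0.8, feedback_prob=1.0, flip_prob=0.3))
# No feedback: confidence stays at its prior (0.9) even though accuracy is 0.3.
print(simulate_confidence(0.3, feedback_prob=0.0, flip_prob=0.0, prior=0.9))
```

In this sketch, the learner's judgment is the same in every run; only the informational quality of the feedback changes, and that alone is enough to pull confidence away from accuracy in either direction.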

In uncertain environments, individuals may remain confident even when outcomes deteriorate.

This does not necessarily reflect overconfidence or denial. Instead, it often reflects:

When prediction error cannot be resolved, confidence may persist by necessity rather than by bias.

The opposite pattern is also common. Individuals may experience reduced confidence even when decisions are correct.

Without reliable confirmation:

This can lead to hesitation or overcorrection, not because decisions are poor, but because calibration signals are weak.

The divergence between confidence and accuracy under uncertainty is not random. It reflects the inability of predictive models to converge when informational structure is unstable.

In these conditions:

This decoupling is a hallmark of cognitive performance under uncertainty.

Confidence instability is often attributed to:

While these factors may coexist, they are not required to explain the observed patterns. Reduced predictive reliability alone is sufficient to produce confidence–accuracy divergence.

When confidence fluctuates independently of performance, it should not be assumed that individuals lack insight or competence.

Instead, confidence variability may reflect rational responses to environments where outcomes fail to provide clear calibration signals.

Recognizing this distinction prevents misdiagnosis of performance issues and avoids inappropriate corrective strategies.

Confidence–accuracy decoupling is a direct consequence of uncertainty. When prediction cannot reliably stabilize, confidence loses its role as a dependable indicator of decision quality.

This pattern reflects broader principles of Cognitive Performance Under Uncertainty, where informational instability—not motivation or effort—drives changes in learning, judgment, and subjective certainty.

Under uncertainty, confidence is not a reliable guide to accuracy. Its instability reflects the structure of the environment rather than the quality of cognition.

Understanding this distinction allows performance to be interpreted more accurately in settings where reliable feedback is unavailable.

Welcome to the Research and Strategy Services at in today's fast-paced.

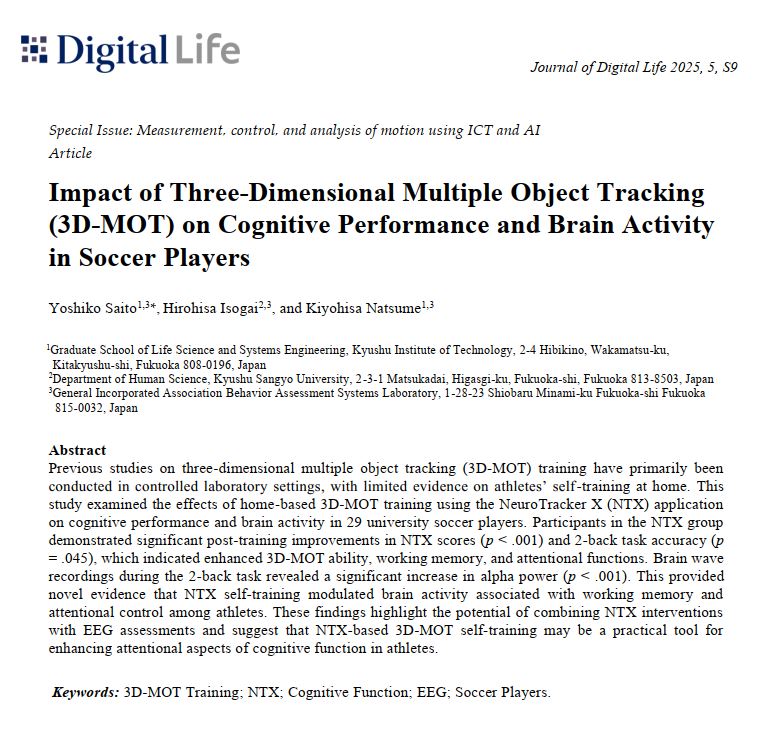

Watch our recent NeuroTracker webinar with Mick Clegg, former Manchester United Power Development Coach

Sometimes the action is clear, but the consequences are not. This article explores how hesitation often comes from uncertainty about what happens next—not uncertainty about the action itself.

Some things can happen directly in front of you and still go unnoticed. This article explains how attention filters the environment, shaping what enters awareness and what gets missed.

.png)